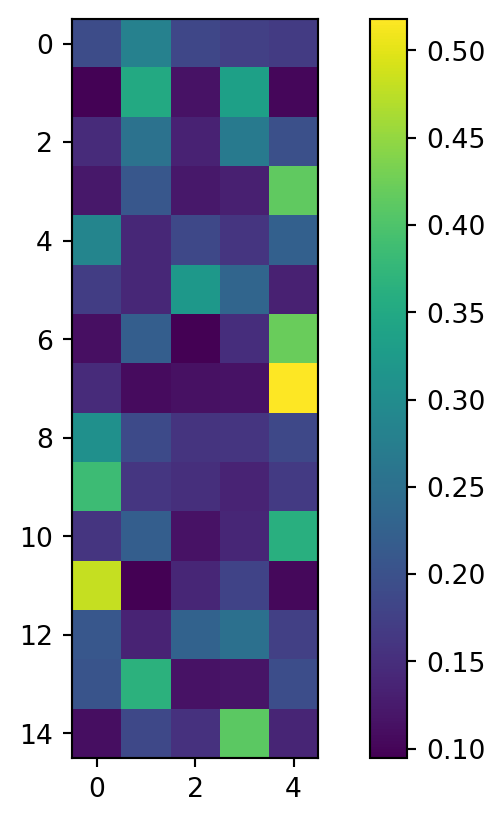

Document-topic matrix (printed as a flat list of integer topic IDs, apparently one per document):

[-1, 30, -1, -1, 24, -1, 1, 5, -1, -1, -1, -1, -1, 5, 0, 8, -1, 5, 9, 10, 9, 3, -1, 30, -1, -1, -1, -1, 19, -1, -1, 8, 8, -1, -1, -1, -1, 9, 1, 9, 30, 5, -1, 29, 12, -1, -1, -1, 1, -1, 8, 3, 1, 17, -1, 10, 4, 10, -1, 1, 1, 18, -1, 19, -1, 11, 3, 8, 0, 18, 18, 18, 30, 17, -1, -1, 18, 2, 9, 8, 30, 9, 18, 8, -1, 0, -1, 9, 5, 5, 28, 8, -1, -1, 2, -1, 19, -1, -1, 0, 18, -1, -1, -1, -1, 23, 8, 17, 18, -1, -1, -1, 9, -1, 9, 1, 30, 5, -1, 5, 28, -1, 2, 8, -1, -1, 8, 8, 9, -1, -1, -1, 12, -1, -1, 16, 8, -1, 24, -1, 6, -1, 9, 22, 10, -1, 10, 6, 9, -1, -1, 3, -1, -1, -1, 9, -1, -1, 3, -1, 10, 0, 0, 0, 23, 0, 1, -1, 24, -1, -1, 27, 4, 3, -1, -1, 27, 24, -1, 5, 9, -1, -1, 0, 1, -1, 18, 8, -1, 1, 28, -1, 9, 13, -1, -1, -1, 5, -1, 21, 17, 1, 18, 27, 1, 8, 9, 1, -1, -1, 0, 9, 2, -1, -1, 1, -1, -1, -1, -1, 24, 14, -1, -1, 5, 23, 6, 5, 1, -1, -1, 3, -1, -1, -1, 27, 17, -1, -1, -1, -1, 1, -1, 18, -1, -1, 1, 10, 10, -1, -1, -1, -1, 10, 9, 8, 1, 17, 23, 17, 29, -1, -1, 1, 5, -1, 5, -1, -1, -1, -1, -1, 13, 19, 17, -1, -1, -1, -1, -1, 29, 30, -1, 18, 1, 10, -1, 5, 27, 3, 9, 27, -1, 10, 9, -1, -1, 0, 0, -1, -1, -1, 29, 9, -1, -1, -1, 16, 5, 16, 1, 1, 27, -1, 1, 20, 3, -1, -1, 23, 26, 27, -1, 3, 8, -1, -1, 10, 20, -1, 11, 19, 11, 11, -1, -1, -1, 3, 17, 0, 10, 0, 6, 23, 3, 5, -1, 11, 12, -1, -1, 0, -1, 5, 28, -1, -1, -1, -1, -1, 16, -1, 13, 0, -1, 8, -1, 10, 26, -1, 5, 0, 3, -1, 10, 10, 20, 11, 10, -1, 10, -1, 6, 0, 24, 26, -1, 3, 11, 26, -1, 4, 0, 0, 8, -1, -1, 8, -1, 23, -1, -1, -1, 3, -1, 13, 18, -1, -1, 19, 8, 6, 27, -1, -1, -1, 11, 10, 5, -1, -1, -1, -1, -1, -1, 6, 8, 21, -1, -1, -1, 11, 24, -1, -1, -1, -1, 30, 8, 4, 5, 5, 2, 5, 11, -1, -1, -1, -1, -1, 4, -1, -1, 30, -1, -1, 18, -1, 0, -1, 0, 20, 17, 6, 1, 17, -1, 27, 6, 3, -1, -1, 11, 25, 2, -1, 17, 16, 24, 20, -1, -1, -1, -1, -1, -1, 11, -1, 11, 11, -1, 5, 10, -1, 0, -1, -1, 24, 10, -1, -1, -1, 25, -1, -1, 24, 11, 5, -1, 0, -1, 10, -1, 18, 4, 20, 8, 18, -1, -1, -1, 2, -1, -1, 10, -1, 3, 5, 1, -1, 16, 7, 12, -1, 3, -1, 6, 27, 23, 24, 
11, 16, -1, 10, -1, 8, 12, 8, 2, 8, -1, 4, 11, 8, -1, 8, 1, 16, -1, 16, -1, -1, 9, -1, 11, 0, 3, -1, -1, -1, -1, 1, 3, 1, 16, -1, -1, 13, 0, -1, 6, 1, -1, 20, 24, 24, 29, -1, -1, -1, 1, 30, -1, 0, -1, 4, 16, 20, 28, 0, 19, -1, -1, -1, 2, -1, 13, -1, -1, 13, -1, 30, 25, 0, -1, -1, 0, 2, 30, 0, 26, -1, 17, 4, -1, 11, -1, 17, 20, 10, 8, 20, -1, 3, 19, 5, -1, 20, -1, -1, 13, -1, 2, 3, -1, 0, 7, 12, 12, 12, 12, 12, 12, 12, -1, 12, 12, 12, 12, 2, 3, -1, 6, 11, -1, -1, 29, -1, 29, 29, 9, -1, 0, 29, 26, -1, 0, -1, -1, 5, 10, -1, 10, -1, 0, -1, -1, 1, -1, -1, 6, 1, 19, 0, 16, -1, -1, -1, -1, 18, 13, -1, 16, 13, 4, 18, -1, -1, 20, 13, 22, -1, 3, 7, 2, 7, 7, 2, 7, 2, 7, 12, 5, 0, 17, 3, 6, 4, 5, -1, -1, 0, 9, -1, -1, 3, -1, -1, 2, -1, 4, 6, 4, 2, 4, 4, 4, 4, 4, 4, 4, 4, 4, -1, 1, 4, 16, 4, 4, 26, 0, 11, 18, -1, -1, 23, 0, -1, -1, 3, -1, -1, -1, 7, -1, -1, 7, -1, 20, -1, -1, 1, 28, -1, 25, 7, 25, 26, -1, -1, 0, 6, 16, 3, 7, -1, 20, -1, -1, 22, -1, 30, 16, -1, 16, 26, -1, -1, 12, 6, 6, 9, -1, -1, -1, 27, 9, -1, 17, 17, -1, 1, 1, 17, -1, -1, 17, 17, -1, 17, 20, 19, 22, 6, 0, -1, 4, -1, -1, -1, 0, 9, 16, -1, 7, 8, 2, 29, -1, -1, 4, 12, 14, 0, 23, 7, -1, 6, 13, -1, 13, -1, 13, 13, 13, 13, 13, -1, -1, -1, 4, 5, 19, 1, 19, 5, -1, -1, 26, 13, -1, 12, 12, 12, 12, 12, 12, 12, -1, 12, 11, 7, 13, 22, 15, 6, 0, 2, 10, -1, -1, 0, -1, -1, -1, -1, -1, -1, 21, 4, -1, 24, 20, -1, 0, -1, 20, -1, 25, -1, -1, 26, -1, -1, -1, 19, -1, -1, 0, 19, -1, 25, 0, 20, 6, -1, 31, -1, 3, 7, -1, -1, -1, 18, -1, 4, 29, 19, -1, -1, 6, -1, -1, -1, 21, 1, 3, 1, 20, 1, 1, -1, 13, 1, -1, 23, 11, 2, 25, -1, -1, 9, 2, -1, 5, -1, 22, 28, 0, -1, 0, -1, 1, 1, 1, 1, 1, 1, 1, 1, -1, 2, -1, -1, 25, -1, 25, -1, -1, 2, -1, 31, 11, 21, 7, -1, 7, 5, -1, 25, -1, -1, 6, 10, 22, -1, 26, -1, 21, 3, -1, -1, -1, -1, -1, 25, 2, -1, -1, -1, -1, 6, -1, -1, -1, 2, 2, 2, 2, 0, 2, -1, 23, 26, -1, -1, -1, 4, 6, 7, 23, 8, -1, -1, -1, 0, -1, 3, -1, 21, 2, 2, -1, 7, 7, 7, -1, -1, -1, 17, 7, 7, -1, 7, -1, 29, -1, 0, 16, -1, -1, -1, -1, 21, 0, 
-1, -1, -1, 7, -1, 7, -1, 6, 11, 31, 15, 31, 11, 31, 31, -1, -1, -1, 9, 11, 28, -1, -1, -1, -1, 0, -1, 0, -1, 2, 0, 25, 0, 28, -1, 0, -1, 0, 15, -1, 2, 29, -1, -1, 28, -1, -1, 2, -1, -1, 2, -1, 21, 13, 4, -1, 4, -1, -1, -1, -1, -1, 2, 2, -1, 7, 7, 6, 25, -1, -1, -1, -1, 9, 21, 14, 7, 22, -1, 3, 3, 3, 3, -1, 6, 19, 3, 22, -1, 4, -1, -1, -1, 22, 22, 19, 1, 1, -1, -1, -1, 31, -1, 23, 31, -1, 16, -1, -1, 22, -1, 13, 21, -1, -1, 7, 14, -1, 21, 21, -1, -1, 7, 21, -1, 22, 21, -1, 7, -1, 24, -1, -1, -1, -1, 23, -1, 16, -1, -1, -1, 4, -1, 16, 6, -1, 2, -1, 11, 21, 2, 6, 21, 23, 2, 2, 31, -1, -1, -1, -1, -1, 6, -1, 2, 7, 21, -1, 2, 6, -1, -1, 6, 28, -1, -1, -1, -1, 14, -1, -1, -1, -1, 14, -1, -1, 14, -1, 14, 13, 22, 5, -1, -1, -1, -1, 7, -1, -1, -1, 19, -1, -1, 9, -1, 15, -1, 28, 15, 13, 14, -1, 14, 8, 9, 14, 15, -1, 14, -1, 14, -1, 31, 14, 14, 9, 14, 6, -1, -1, -1, 14, 14, -1, 14, -1, 27, 14, 14, 14, 16, 14, 14, 14, 3, 15, 15, 28, 15, -1, -1, 15, 15, 15, 15, -1, -1, 15, 15, 15, 15, 15, 15, 15, -1, 15, 19, -1, 15, 15, 22, 22, 22]
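
A list this long is hard to read by eye, so a short summary of topic sizes may help. The sketch below is a minimal example, not the method used to produce the output above. It assumes that each entry is the topic ID of one document and that `-1` marks documents left unassigned (the convention in density-based clusterers such as HDBSCAN; the source does not confirm this). The helper `summarize_topics` and its `outlier_label` parameter are hypothetical names introduced for illustration.

```python
from collections import Counter


def summarize_topics(assignments, outlier_label=-1):
    """Return (topic_sizes, outlier_fraction) for a flat list of topic IDs.

    Assumption: `outlier_label` (-1 by default) marks unassigned documents.
    """
    counts = Counter(assignments)
    # Remove the outlier bucket so it is not reported as a topic.
    n_outliers = counts.pop(outlier_label, 0)
    total = len(assignments)
    outlier_fraction = n_outliers / total if total else 0.0
    # Largest topics first; ties are broken by ascending topic ID.
    topic_sizes = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return topic_sizes, outlier_fraction


# The first ten values of the list above, used as a small sample.
sample = [-1, 30, -1, -1, 24, -1, 1, 5, -1, -1]
sizes, frac = summarize_topics(sample)
print(sizes)  # (topic_id, document_count) pairs
print(frac)   # share of documents carrying the outlier label
```

Passing the full list in place of `sample` gives the per-topic document counts and the share of documents labelled `-1`.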